With the recent growth in monitoring tools, security and surveillance, TAP aggregation has become a necessity. We recently had a look at BigSwitch BigTap, their tap aggregation solution, and put it through some basic load and functional testing with TRex…

BigTap Overview

BigTap is essentially a production-grade SDN solution on commodity bare-metal switches providing tap aggregation capabilities. More detail on the current software version can be found here: Big Tap Monitoring Fabric

The solution provides some filtering capabilities, the ability to scale 10G, 40G and 100G taps to any collector or device at multiple points, and line-rate replication of traffic.

The Setup

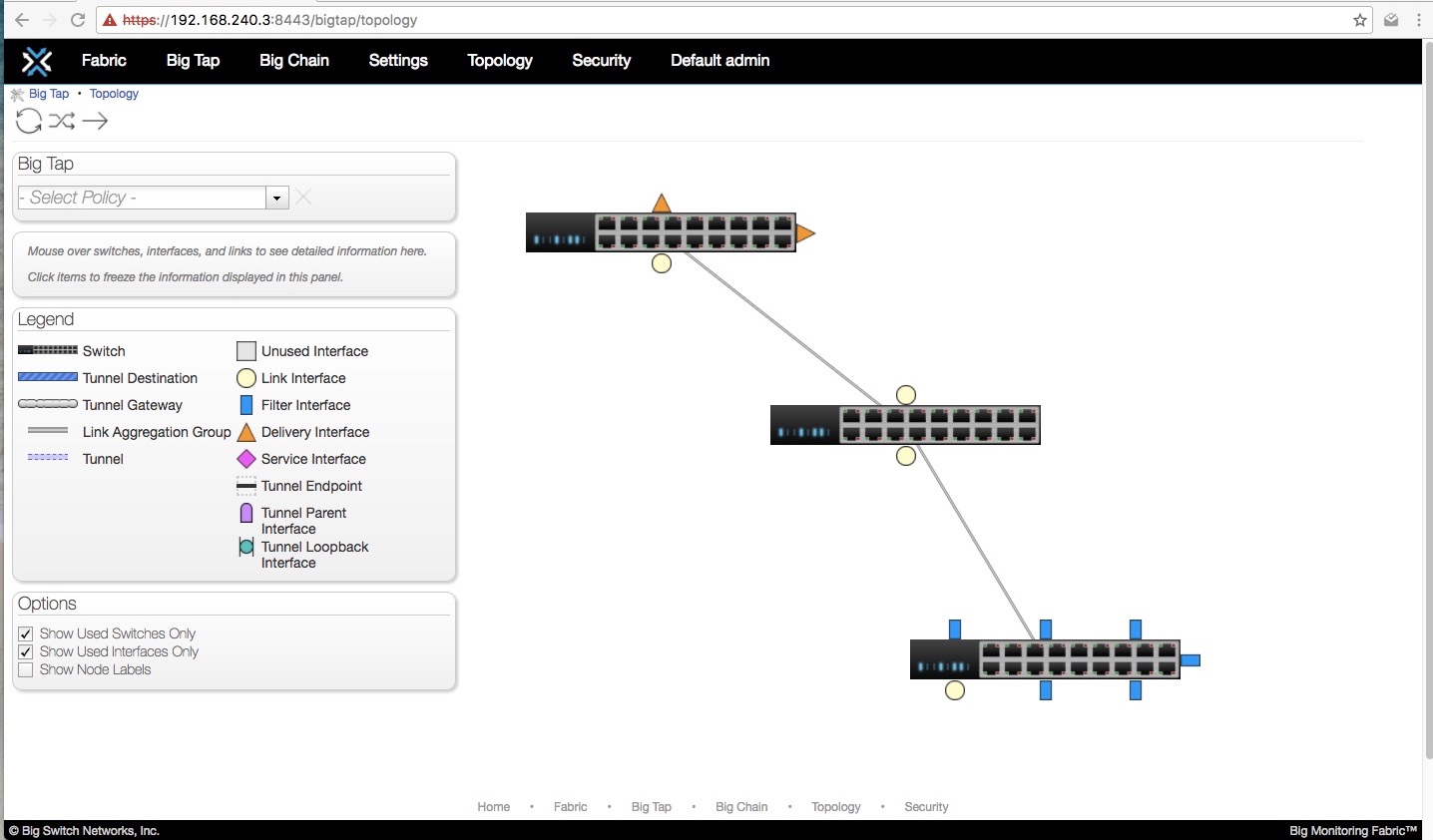

The basic design we tested consisted of three switches running BigTap:

| Device | Interface | Purpose |
|---|---|---|
| Switch_2 | ethernet1 | Filter 10Gb |
| Switch_2 | ethernet2 | Filter |
| Switch_2 | ethernet3 | Filter |
| Switch_2 | ethernet4 | Filter |
| Switch_2 | ethernet17 | Filter |
| Switch_2 | ethernet18 | Filter |
| Switch_3 | ethernet3 | Delivery 10Gb |
| Switch_1 | ethernet53 | Core 10Gb |
| Switch_1 | ethernet54 | Core |

Load Generator

We used TRex for this test, combining traffic from the six filter (ingress) interfaces, sending all of it across the core interfaces and out of the delivery ports, where an nProbe collector displays NetFlow data about the traffic being collected. (The nProbe server can handle only ~8Gbps.)

For the test we ran both stateful and stateless traffic profiles. The printed results and video below are from the stateless profile, as it generated the most throughput.

The TRex interface config file we used included 4x Intel X710 NICs on one server and 2x Intel X710 NICs on the other, for a total of six interfaces generating traffic on 2x HP DL380 G7 servers. Details of the trex_cfg.yaml can be found below; it also includes some CPU / thread tweaks to pin NICs to cores, so as not to overload the CPU and to try to obtain the best performance.

    ### Config file generated by dpdk_setup_ports.py ###

    - port_limit: 4
      version: 2
      interfaces: ['0b:00.0', '0b:00.1', '0e:00.0', '0e:00.1']
      # interfaces: ['0b:00.0', '0b:00.1']
      port_info:
          - dest_mac: [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x85] # MAC OF LOOPBACK TO ITS DUAL INTERFACE
            src_mac:  [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x84]
          - dest_mac: [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x84] # MAC OF LOOPBACK TO ITS DUAL INTERFACE
            src_mac:  [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x85]
          - dest_mac: [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x99] # MAC OF LOOPBACK TO ITS DUAL INTERFACE
            src_mac:  [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x98]
          - dest_mac: [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x98] # MAC OF LOOPBACK TO ITS DUAL INTERFACE
            src_mac:  [0x3c, 0xfd, 0xfe, 0xa0, 0x25, 0x99]
      c: 7
      platform:
          master_thread_id: 0
          latency_thread_id: 8
          dual_if:
            - socket: 0
              threads: [1, 2, 3, 4, 5, 6, 7]
            - socket: 1
              threads: [9, 10, 11, 12, 13, 14, 15]
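
As a rough sanity check on the thread pinning, the sketch below verifies that the worker threads in each `dual_if` entry do not collide with the master or latency threads, or with each other. The values are copied by hand from the config above; `check_pinning` is a hypothetical helper, not part of TRex, and it assumes `c` is the number of worker threads wanted per port pair.

```python
# Values copied by hand from the trex_cfg.yaml shown above.
platform = {
    "master_thread_id": 0,
    "latency_thread_id": 8,
    "dual_if": [
        {"socket": 0, "threads": [1, 2, 3, 4, 5, 6, 7]},
        {"socket": 1, "threads": [9, 10, 11, 12, 13, 14, 15]},
    ],
}
cores_per_pair = 7  # the "c" value; assumed to mean worker threads per port pair


def check_pinning(platform, cores_per_pair):
    """Hypothetical helper: return a list of layout problems (empty if none)."""
    problems = []
    reserved = {platform["master_thread_id"], platform["latency_thread_id"]}
    seen = set()
    for pair in platform["dual_if"]:
        threads = set(pair["threads"])
        if threads & reserved:
            problems.append(f"socket {pair['socket']}: overlaps master/latency thread")
        if threads & seen:
            problems.append(f"socket {pair['socket']}: overlaps another port pair")
        if len(threads) < cores_per_pair:
            problems.append(f"socket {pair['socket']}: fewer threads than c")
        seen |= threads
    return problems


print(check_pinning(platform, cores_per_pair))  # prints []
```

An empty list means each port pair has its own set of threads, separate from the master (0) and latency (8) threads.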

Traffic Profile

The traffic profile used for testing was a simple three-flow IMIX profile, with all packets being 1514-byte UDP. In other tests this was also mixed with multiple flows and packet sizes.

    from trex_stl_lib.api import *


    # IMIX profile - involves 1 stream of UDP packets
    # 1 - 1514 bytes
    class STLImix(object):

        def __init__(self):
            # default IP range
            self.ip_range = {'src': {'start': "16.0.0.1", 'end': "16.0.0.254"},
                             'dst': {'start': "48.0.0.1", 'end': "48.0.0.254"}}

            # default IMIX properties
            self.imix_table = [{'size': 1514, 'pps': 500000, 'isg': 0}]

        def create_stream(self, size, pps, isg, vm):
            # Create base packet and pad it to size
            base_pkt = Ether()/IP()/UDP()
            pad = max(0, size - len(base_pkt)) * 'x'

            pkt = STLPktBuilder(pkt=base_pkt/pad,
                                vm=vm)

            return STLStream(isg=isg,
                             packet=pkt,
                             mode=STLTXCont(pps=pps))

        def get_streams(self, direction=0, **kwargs):
            if direction == 0:
                src = self.ip_range['src']
                dst = self.ip_range['dst']
            else:
                src = self.ip_range['dst']
                dst = self.ip_range['src']

            # construct the base packet for the profile
            vm = [
                # src
                STLVmFlowVar(name="src", min_value=src['start'], max_value=src['end'], size=4, op="inc"),
                STLVmWrFlowVar(fv_name="src", pkt_offset="IP.src"),

                # dst
                STLVmFlowVar(name="dst", min_value=dst['start'], max_value=dst['end'], size=4, op="inc"),
                STLVmWrFlowVar(fv_name="dst", pkt_offset="IP.dst"),

                # checksum
                STLVmFixIpv4(offset="IP")
            ]

            # create imix streams
            return [self.create_stream(x['size'], x['pps'], x['isg'], vm) for x in self.imix_table]


    # dynamic load - used for trex console or simulator
    def register():
        return STLImix()
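
To get a feel for what one entry in `imix_table` offers per port, the arithmetic can be sketched as below. This is only an estimate: it assumes the profile `size` excludes the 4-byte FCS and that 20 bytes of preamble and inter-frame gap apply on the wire, and it ignores any rate multiplier applied when the traffic is started. `stream_rates_gbps` is an illustrative helper, not a TRex function.

```python
# Same single entry as in the profile above.
imix_table = [{'size': 1514, 'pps': 500000, 'isg': 0}]

FCS_BYTES = 4            # assumption: profile size excludes the Ethernet FCS
PREAMBLE_IFG_BYTES = 20  # assumption: 8 bytes preamble + 12 bytes inter-frame gap


def stream_rates_gbps(entry):
    """Return (L2 rate, L1 rate) in Gbps for one imix_table entry."""
    l2_bits = entry['size'] * 8
    l1_bits = (entry['size'] + FCS_BYTES + PREAMBLE_IFG_BYTES) * 8
    return l2_bits * entry['pps'] / 1e9, l1_bits * entry['pps'] / 1e9


for entry in imix_table:
    l2, l1 = stream_rates_gbps(entry)
    print(f"{entry['size']} B @ {entry['pps']} pps: {l2:.3f} Gbps L2, {l1:.3f} Gbps L1")
# prints: 1514 B @ 500000 pps: 6.056 Gbps L2, 6.152 Gbps L1
```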

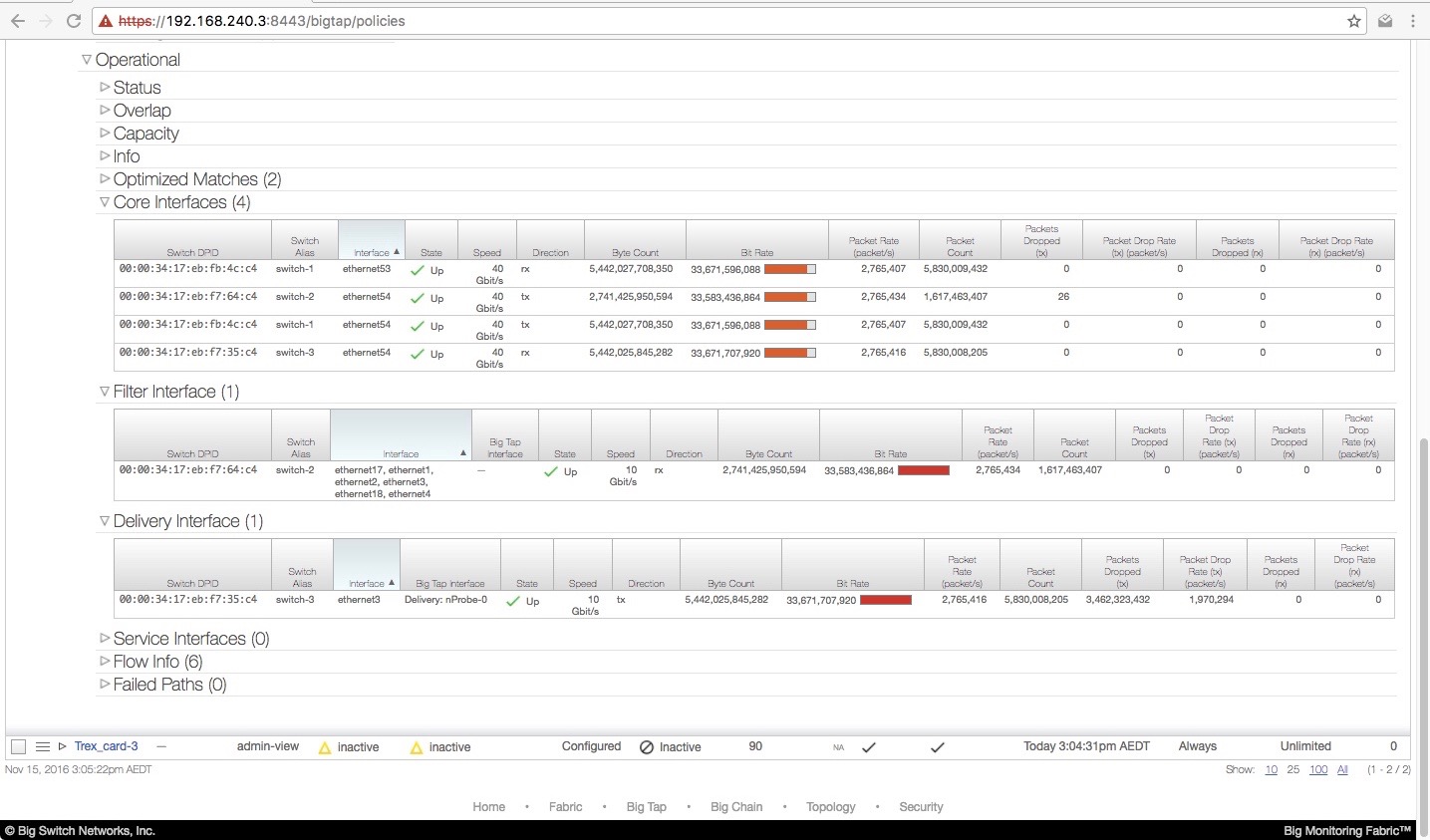

Test Results

Overall performance was fine, with the core interfaces pushing all 33Gbps from the filter ports to delivery without any drops. We could split this into separate policies within BigTap and have three filter interfaces push to one delivery interface and the other three push to another. This could be stopped, modified and restarted without issue.

Changing filter interfaces to delivery and vice versa while a separate active policy was in place pushing ~20Gbps also worked without delay or packet loss to the untouched policy (using the same core interfaces).

Sample output of the flows in flight and BigTap GUI output during the test:

As indicated in the output, the only drops occur when we push the entire 33Gbps towards a single 10Gbps delivery interface, which is expected.
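
The expected loss in that oversubscribed case is simple arithmetic, sketched below. `expected_drop_fraction` is an illustrative helper; it assumes the delivery port forwards at its full nominal rate and drops everything above it.

```python
def expected_drop_fraction(offered_gbps, egress_gbps):
    """Fraction of offered traffic that cannot fit through the egress port."""
    if offered_gbps <= egress_gbps:
        return 0.0
    return (offered_gbps - egress_gbps) / offered_gbps


# 33Gbps offered towards one 10Gbps delivery interface
print(f"{expected_drop_fraction(33, 10):.1%}")  # prints 69.7%
```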

Result Notes

A few points we noted during the test with the switches, and particularly the GUI:

- The GUI will not refresh interface statistics automatically; this needs to be done manually via the ‘refresh’ icon at the top of the page.

- There is a ramp-up time before stats are displayed in the GUI after a refresh (we are not sure what the sampling rate is).

- The CLI does not provide a bps or Mbps rate per interface, only simple bytes-in and bytes-out counters.
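
Since the CLI only exposes byte counters, a rate has to be derived from two samples taken a known interval apart. A minimal sketch of that calculation follows; the counter values are made up for illustration, `rate_mbps` is a hypothetical helper, and counter wrap-around is not handled.

```python
def rate_mbps(bytes_before, bytes_after, interval_s):
    """Average rate in Mbps between two byte-counter samples."""
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return (bytes_after - bytes_before) * 8 / interval_s / 1e6


# Hypothetical samples of a bytes-out counter taken 10 seconds apart
print(rate_mbps(1_000_000_000, 13_500_000_000, 10))  # prints 10000.0
```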

Configuration note for multiple policies sharing core interfaces on BigTap

When we had more than one policy sharing the same core interfaces but using different filter / delivery interfaces, the configuration caused inaccurate forwarding and results. The first policy (the one with higher priority) would take three times the amount of traffic out of the delivery interface, and the second policy would have half the expected rate…

This was overcome by adding VLAN-Tag / Rewrite to each policy. Once we enabled VLAN rewrite per policy and configured a different VLAN tag for each policy, all forwarding behaviour worked as expected. This looks to be a standard configuration item which needs to be followed.